What is the SafeKit Farm NLB solution for Windows/Linux?

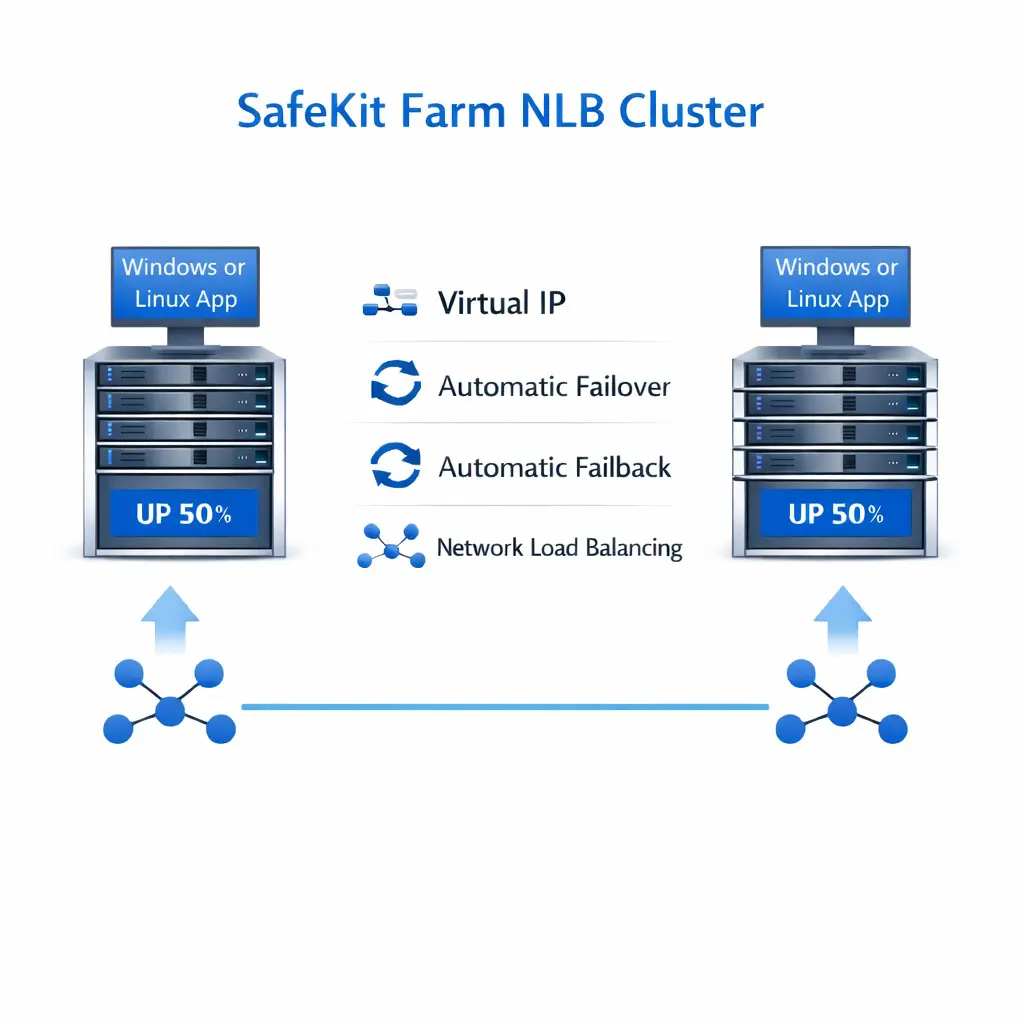

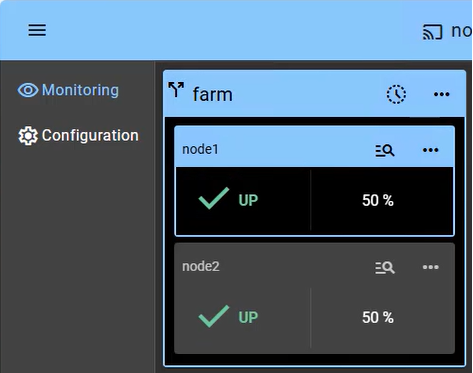

SafeKit provides network load balancing and high availability for Windows and Linux applications running across two or more servers.

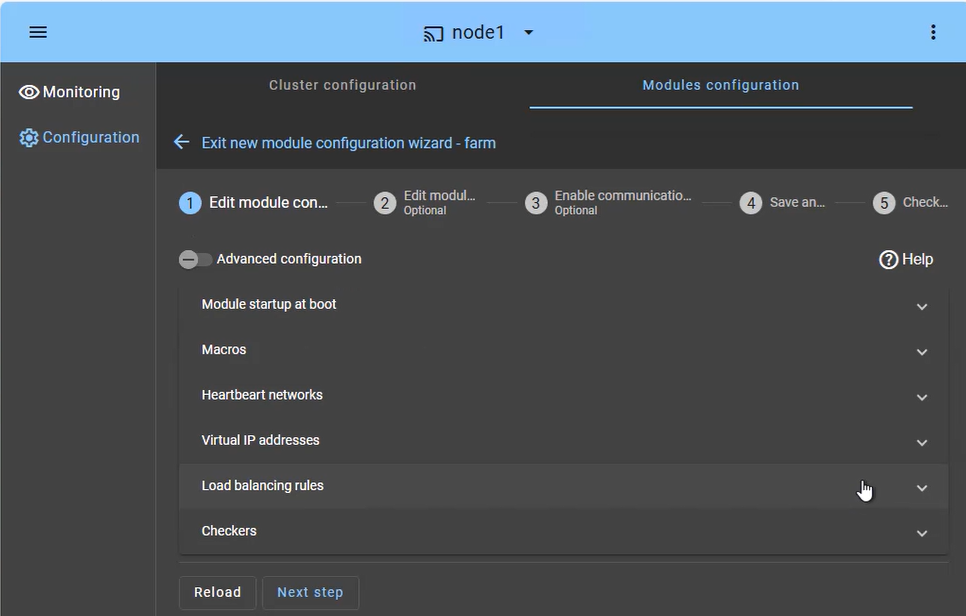

This article explains how to quickly implement a Windows/Linux cluster without hardware load balancers or specialized networking skills.

To configure the solution, you define a virtual IP address with load-balancing rules, the names of the Windows/Linux services to manage, and health checkers that monitor them.

SafeKit then enables network load balancing and automatic failover to ensure scalability and continuous service availability.
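This article does not describe how SafeKit distributes traffic internally. The following is a generic sketch, not SafeKit's implementation or configuration format, of how a load-balancing rule on a virtual IP can combine with health checks. It assumes hash-based distribution on the client's source IP address over a fixed set of buckets. `NUM_BUCKETS`, `bucket_for`, `assign_buckets` and `route` are hypothetical names used only for illustration.

```python
import ipaddress
import zlib

# Hypothetical number of hash buckets shared out among the servers.
NUM_BUCKETS = 256


def bucket_for(client_ip: str) -> int:
    """Hash the client's source IP address into a fixed bucket."""
    packed = ipaddress.ip_address(client_ip).packed
    return zlib.crc32(packed) % NUM_BUCKETS


def assign_buckets(servers, healthy):
    """Spread all buckets round-robin over the servers whose health check passes.

    When a server fails its health check, the table is rebuilt over the
    remaining healthy servers, so its clients move to the surviving nodes.
    """
    live = sorted(s for s in servers if healthy.get(s, False))
    if not live:
        raise RuntimeError("no healthy server available")
    return {b: live[b % len(live)] for b in range(NUM_BUCKETS)}


def route(client_ip, servers, healthy):
    """Return the server that should handle traffic from client_ip."""
    table = assign_buckets(servers, healthy)
    return table[bucket_for(client_ip)]
```

For example, with `servers = ["node1", "node2"]` and both nodes healthy, repeated calls to `route("192.0.2.10", servers, healthy)` always return the same node, which keeps a client on one server. If that node's health check fails, the same client is routed to the other node. Hashing on the source address is one common choice because it needs no shared session state between servers.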