What is the SafeKit HA solution for Bosch AMS?

SafeKit brings high availability to Bosch AMS between two servers of any brand.

This article explains how to quickly implement a Bosch AMS cluster without shared storage on a SAN and without specific skills.

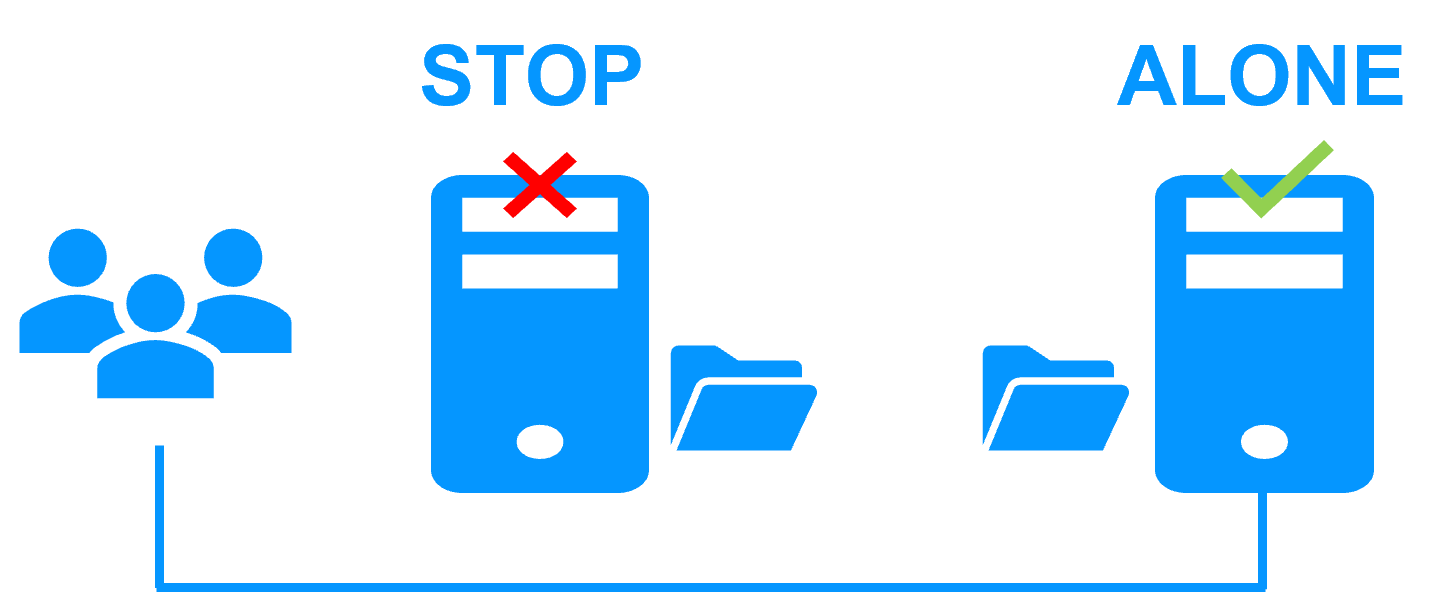

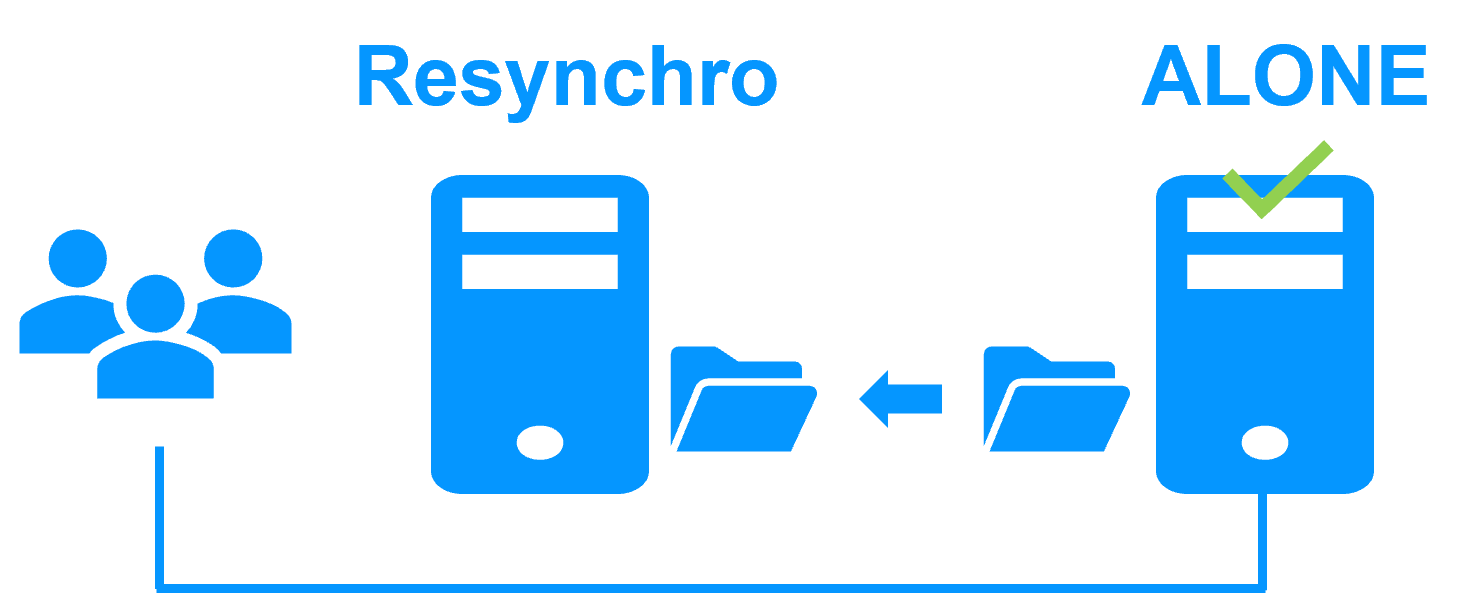

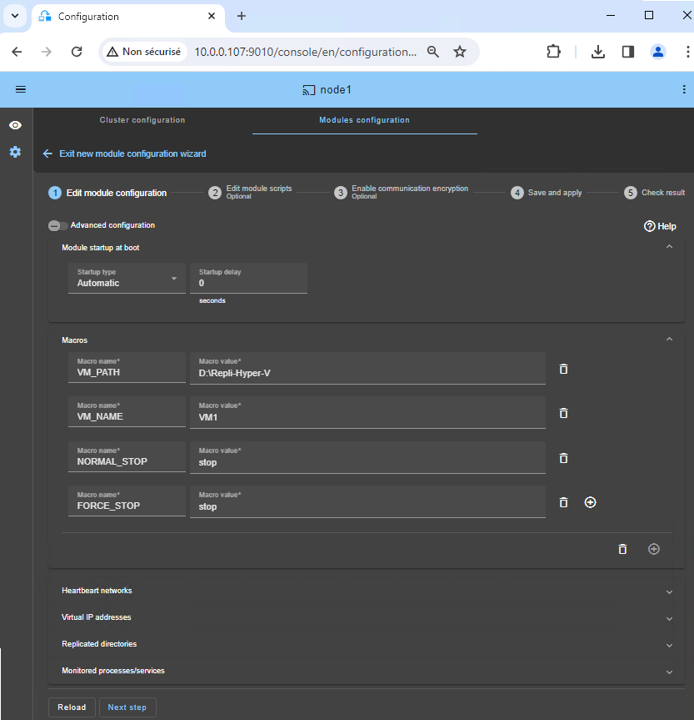

The solution runs Bosch AMS in a virtual machine under Hyper-V. SafeKit replicates the virtual machine in real time between the two servers and fails it over automatically when the active server goes down.
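The replication and failover principle can be illustrated with a minimal toy model. This is only a sketch and not SafeKit's implementation or API: the `Node` and `MirrorCluster` classes, the `ams.vhdx` file name, and the assumption that each write is copied to the second server before the call returns are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One physical server holding a copy of the VM's files (hypothetical model)."""
    name: str
    alive: bool = True
    vm_files: dict = field(default_factory=dict)  # file path -> content


class MirrorCluster:
    """Toy two-node mirror: writes on the primary are copied to the secondary."""

    def __init__(self, primary: Node, secondary: Node):
        self.primary = primary
        self.secondary = secondary

    def write(self, path: str, data: str) -> None:
        # Apply the write on the primary, then copy it to the secondary
        # if that node is reachable (assumed behavior for this sketch).
        self.primary.vm_files[path] = data
        if self.secondary.alive:
            self.secondary.vm_files[path] = data

    def check_and_failover(self) -> Node:
        # If the primary is down and the secondary is up, swap roles so the
        # VM can be restarted on the surviving node from its replicated files.
        if not self.primary.alive and self.secondary.alive:
            self.primary, self.secondary = self.secondary, self.primary
        return self.primary


cluster = MirrorCluster(Node("server1"), Node("server2"))
cluster.write("ams.vhdx", "block-0001")   # hypothetical VM disk file
cluster.primary.alive = False             # simulate a failure of server1
active = cluster.check_and_failover()
print(active.name, active.vm_files)
```

In this model the second server already holds an up-to-date copy of the virtual machine's files when the first one fails, which is why no shared storage is needed for the restart.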

Note that Hyper-V is the hypervisor included at no extra cost in Windows Server and in the Pro, Enterprise, and Education editions of Windows for PC (it is not available in the Home edition).