High Availability Architectures and Best Practices

Evidian SafeKit

Overview

This article explores the different high availability architectures and their best practices by giving the pros and cons of each architecture.

The following comparative tables explain in detail the SafeKit high availability architecture and its best practices (SafeKit is a software high availability product).

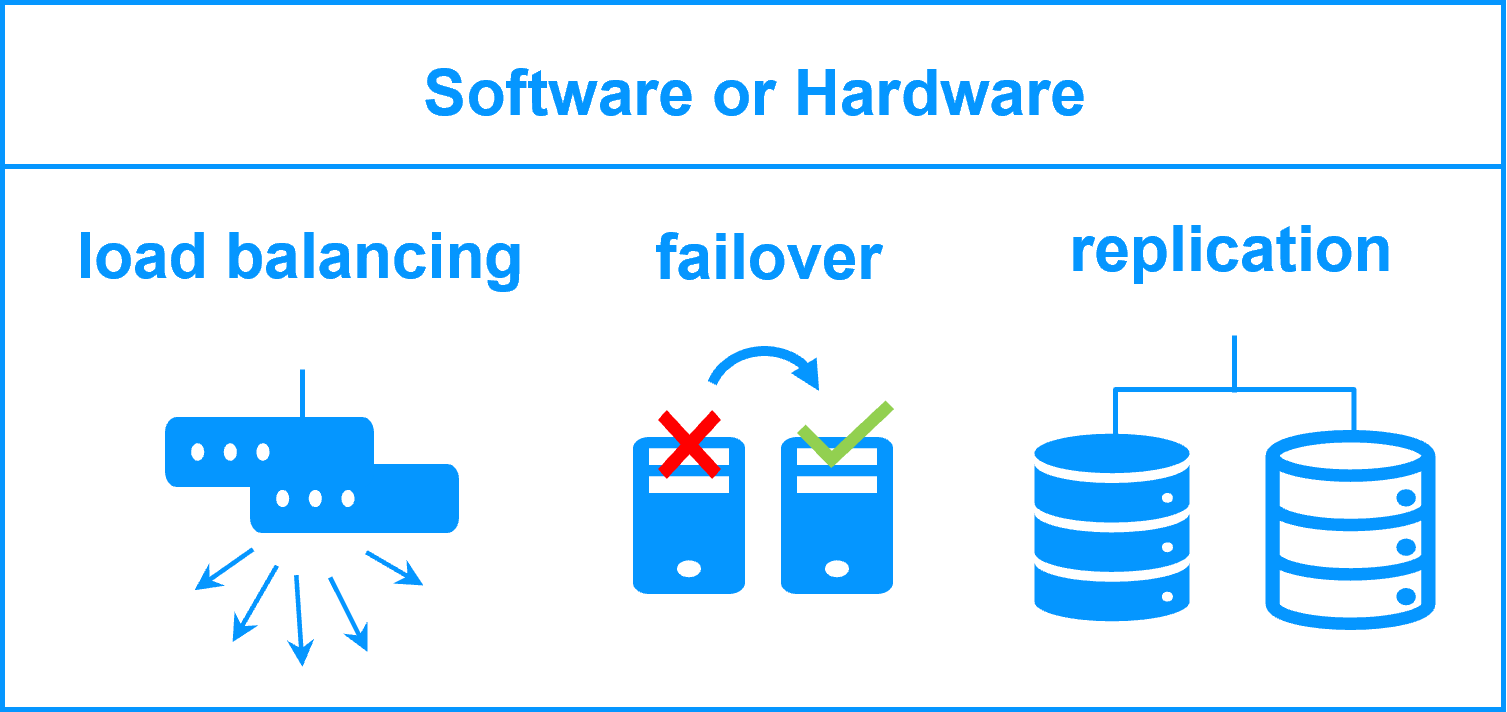

What are the high availability architectures?

There are two types of high availability architectures: those for backend applications such as databases and those for frontend applications such as web services.

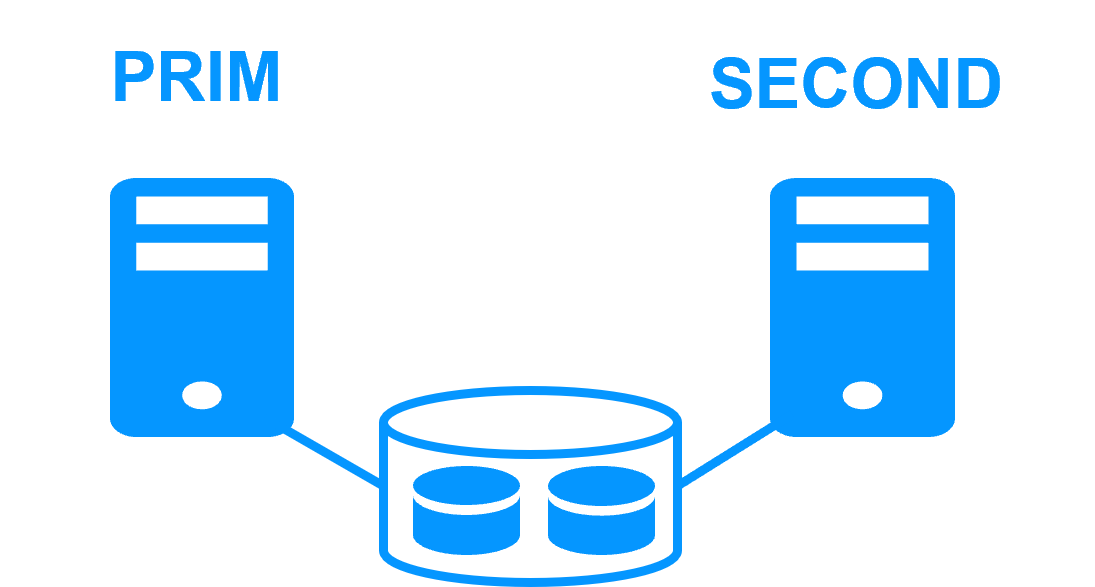

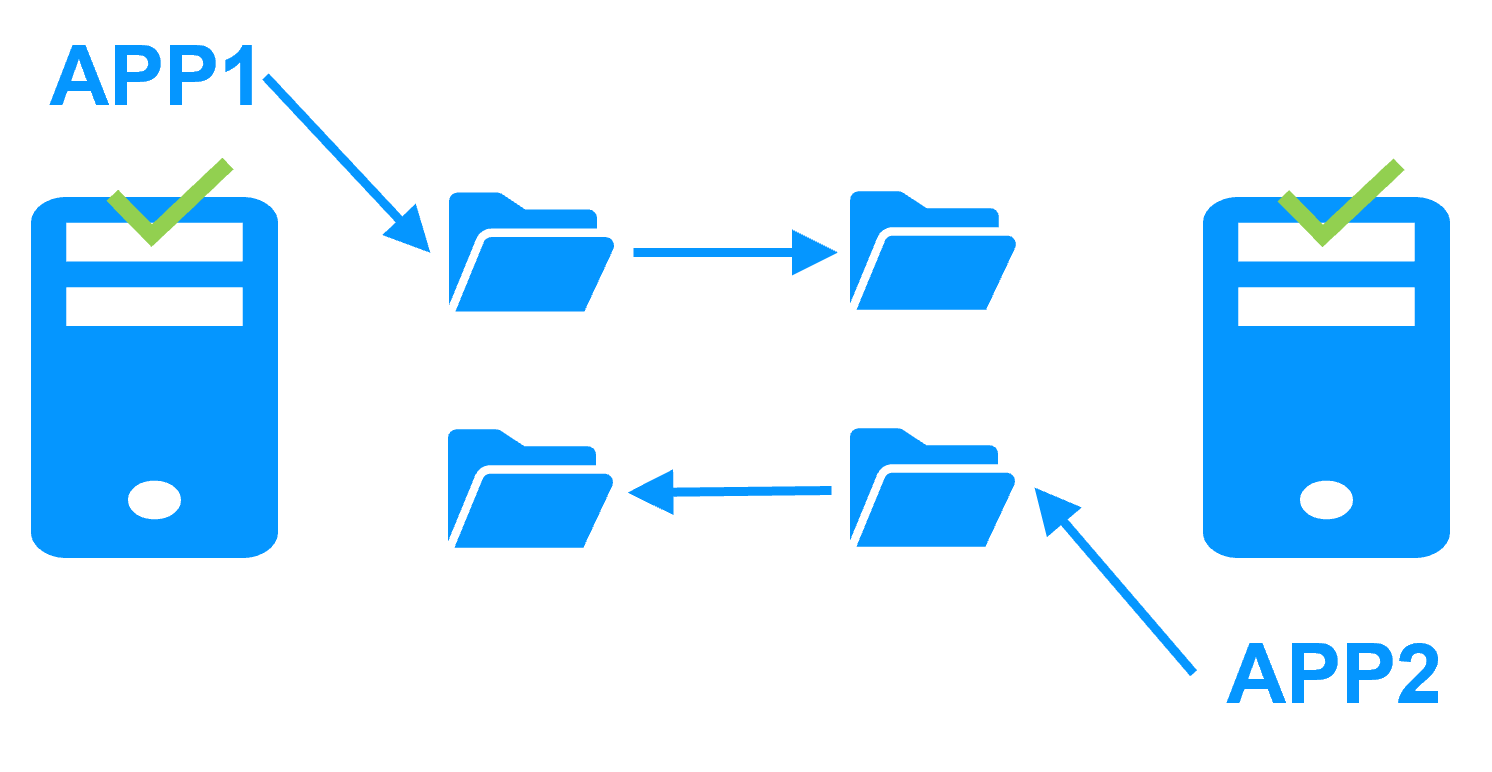

High availability architectures for backend applications are based on 2 servers that share or replicate data, with automatic application failover in the event of hardware or software failure.

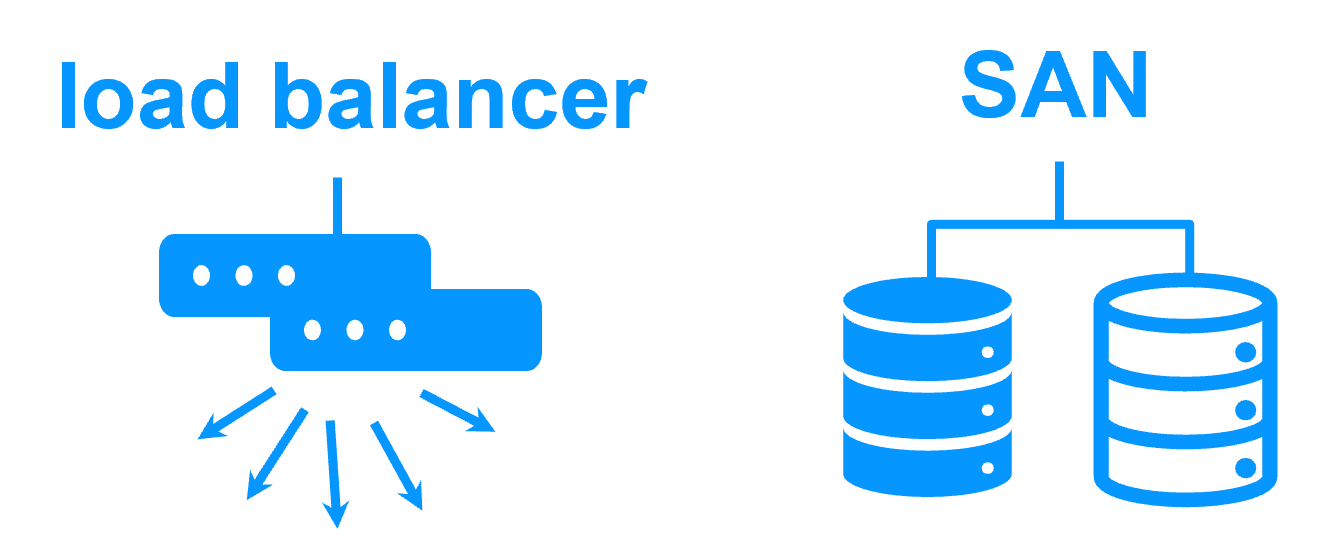
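As an illustration of automatic failover between 2 servers, here is a minimal sketch of a heartbeat-based decision. It is not SafeKit's implementation: the `Node` type, the `choose_active_node` function and the 3-second timeout are hypothetical names and values chosen for the example, and the sketch deliberately ignores quorum and split brain handling.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    last_heartbeat: float  # time (in seconds) at which the last heartbeat was received


def choose_active_node(primary: Node, secondary: Node, now: float,
                       timeout: float = 3.0) -> str:
    """Return the name of the node that should run the application.

    Hypothetical rule: a node is considered healthy if its last heartbeat
    is at most `timeout` seconds old. The primary keeps the application
    while it is healthy; otherwise the application fails over to the
    secondary.
    """
    if now - primary.last_heartbeat <= timeout:
        return primary.name
    if now - secondary.last_heartbeat <= timeout:
        return secondary.name
    raise RuntimeError("no healthy node available")


if __name__ == "__main__":
    server1 = Node("server1", last_heartbeat=10.0)
    server2 = Node("server2", last_heartbeat=13.5)
    # At t=11 the primary is still alive; at t=14 its heartbeat is too old.
    print(choose_active_node(server1, server2, now=11.0))
    print(choose_active_node(server1, server2, now=14.0))
```

A real cluster also has to restart the application on the new node and make sure the old primary is really stopped, which is where quorum comes in.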

High availability architectures for frontend applications are based on a farm of servers (2 servers or more). Load balancing is performed by hardware or software and distributes the TCP sessions to the available servers in the farm.

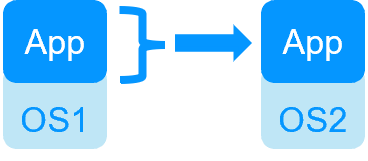

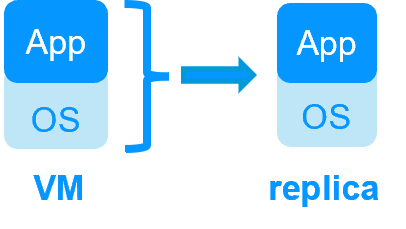
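The distribution of TCP sessions across a farm can be sketched as follows. This is only one possible technique (hashing the client address and port, then taking the result modulo the number of available servers), not a description of how any particular product does it. The `pick_server` function and the server names are hypothetical.

```python
import hashlib


def pick_server(client_ip: str, client_port: int, available: list[str]) -> str:
    """Choose a server of the farm for a TCP session.

    Assumption for this sketch: the session is identified by the client
    IP address and port, and every node sees the same list of available
    servers, so the same session always maps to the same server.
    """
    if not available:
        raise ValueError("no server available in the farm")
    key = f"{client_ip}:{client_port}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(available)
    # Sort so that the result does not depend on the order of the list.
    return sorted(available)[index]


if __name__ == "__main__":
    farm = ["web1", "web2", "web3"]
    print(pick_server("10.0.0.5", 40000, farm))
    # After a failure of web1, sessions are spread over the remaining servers.
    print(pick_server("10.0.0.5", 40000, ["web2", "web3"]))
```

With a plain modulo, removing a server remaps many existing sessions. Schemes such as consistent hashing reduce that effect at the cost of more complexity.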

You also have to choose between high availability at the application level and high availability at the virtual machine level.

What are the best practices?

This article explores the best practices in high availability architectures by comparing:

- software vs hardware clustering,

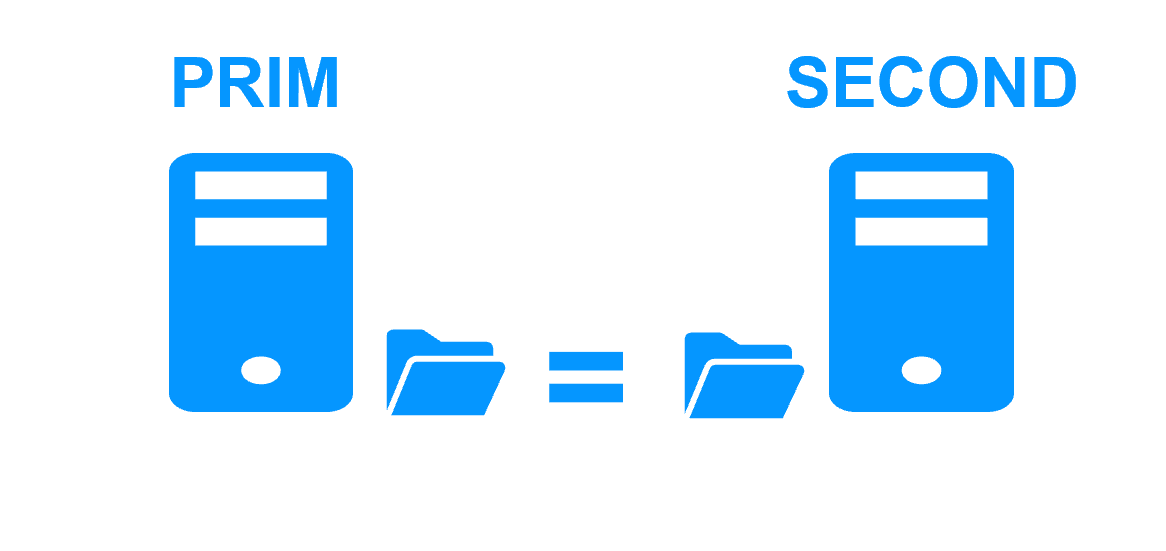

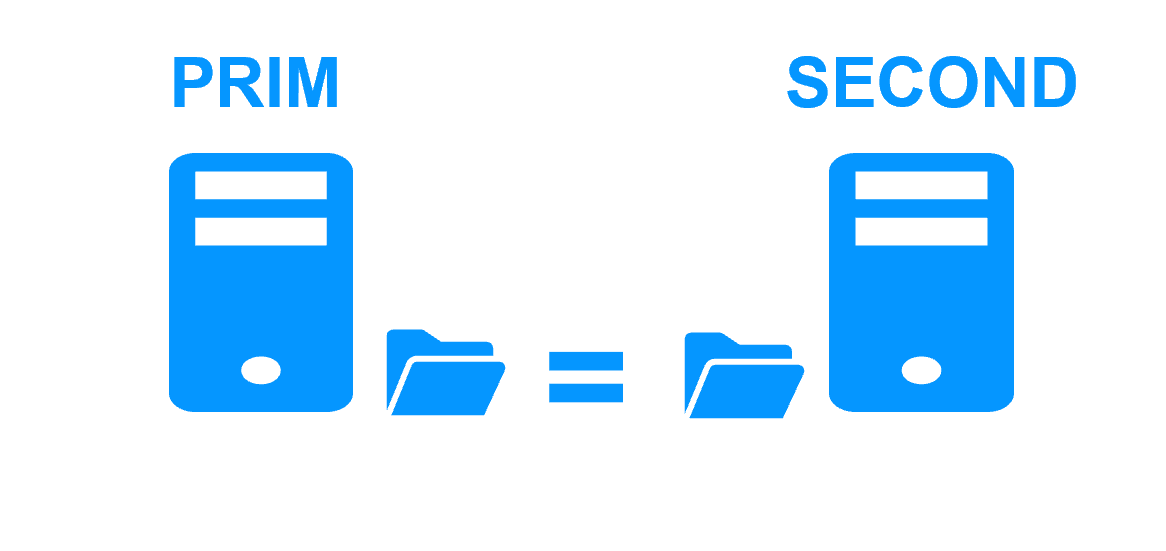

- shared nothing vs shared disk architecture,

- application vs virtual machine high availability,

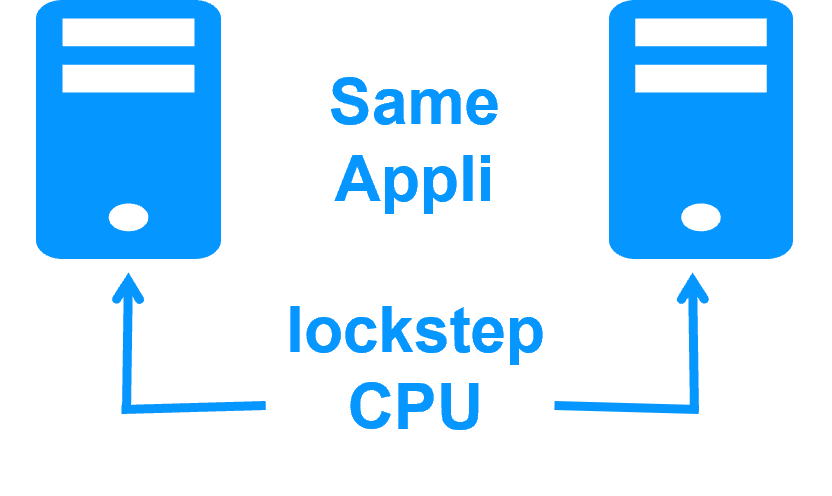

- high availability vs fault tolerance,

- synchronous vs asynchronous replication,

- file vs disk replication,

- data replication techniques,

- RPO and RTO with examples,

- split brain and quorum,

- virtual IP addresses.

Software clustering vs hardware clustering


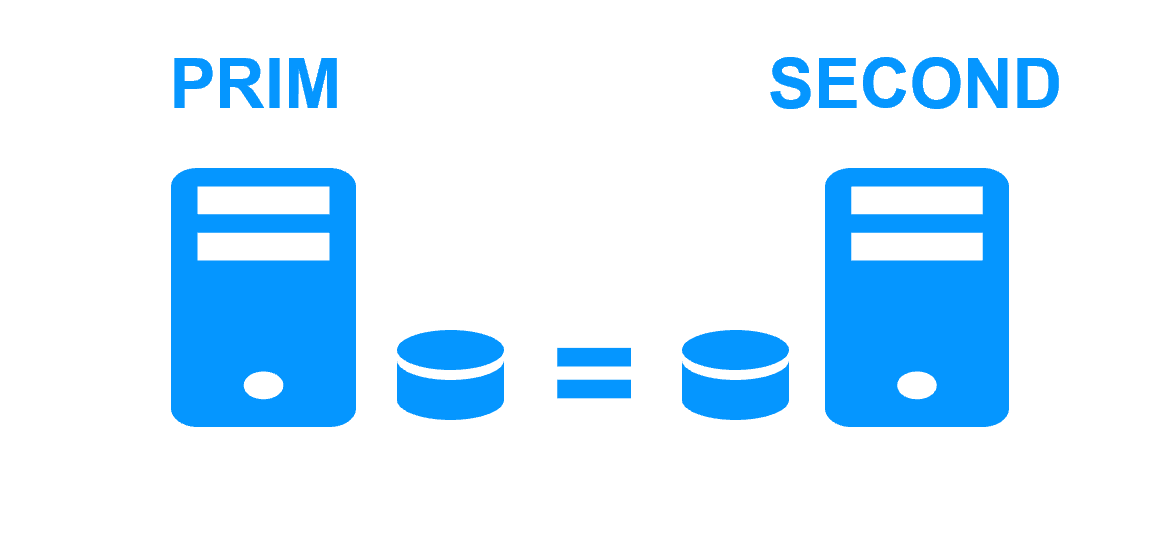

Shared nothing vs a shared disk cluster

Application High Availability vs Full Virtual Machine High Availability


High availability vs fault tolerance


Synchronous replication vs asynchronous replication


Byte-level file replication vs block-level disk replication


Heartbeat, failover and quorum to avoid 2 master nodes


Virtual IP address primary/secondary, network load balancing, failover


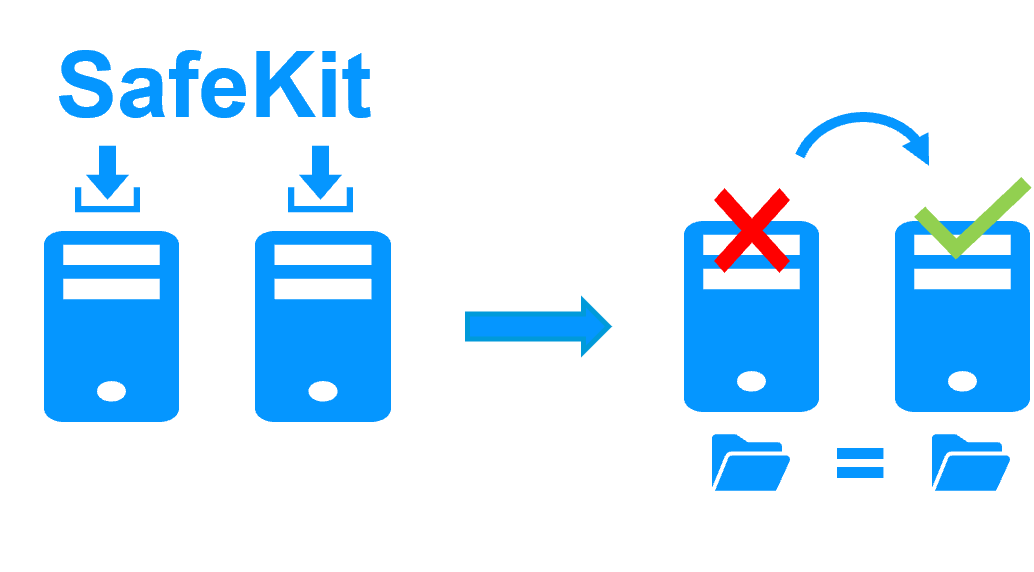

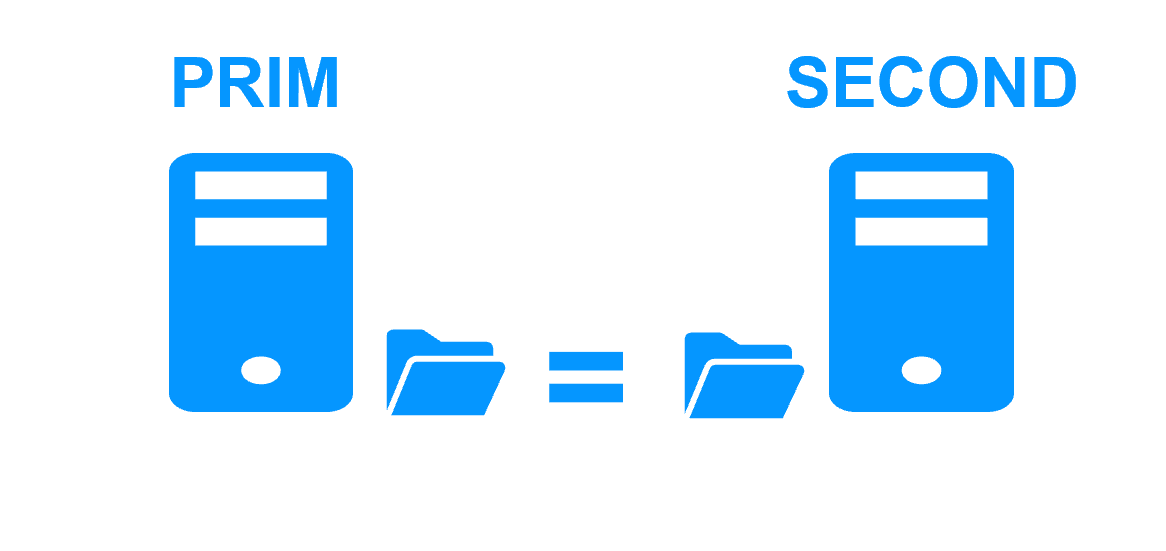

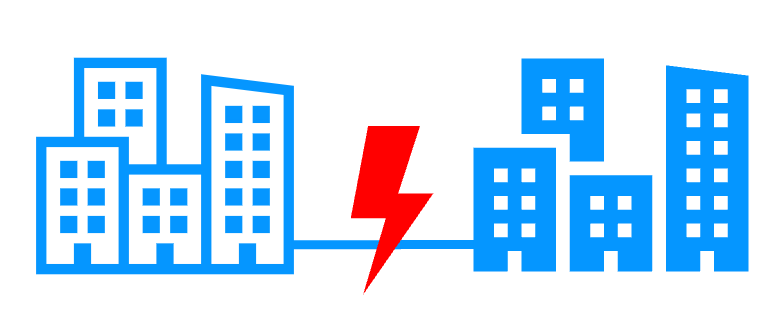

Evidian SafeKit mirror cluster with real-time file replication and failover

3 products in 1

Very simple configuration

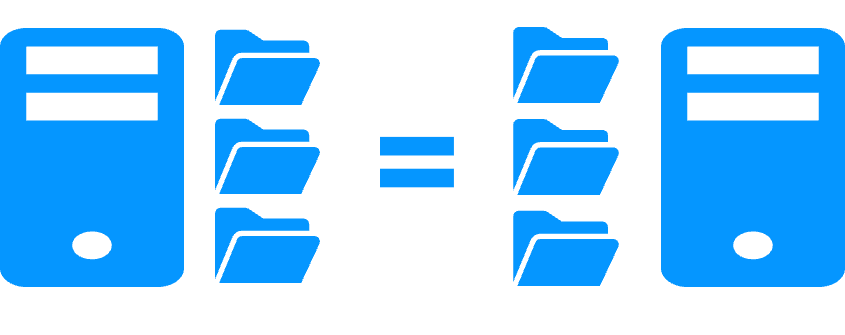

Synchronous replication

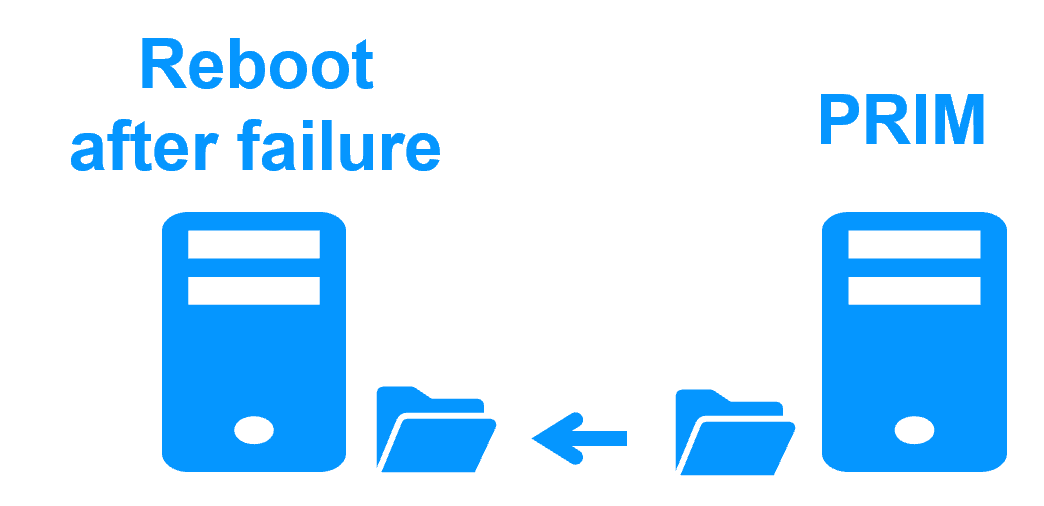

Fully automated failback

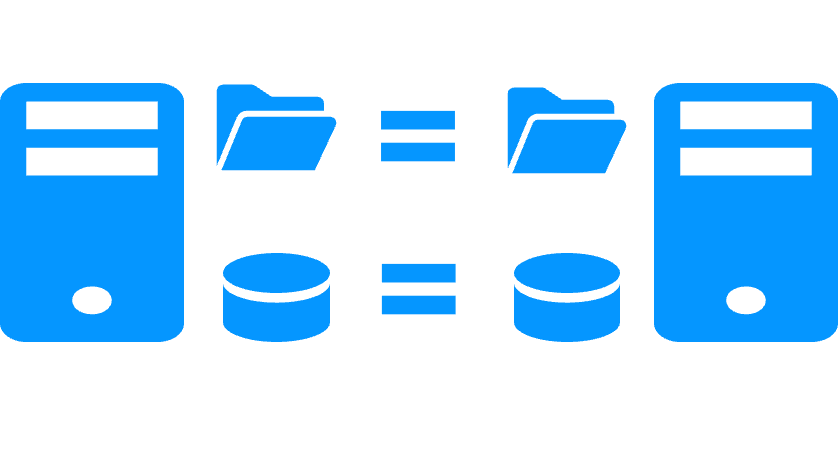

Replication of any type of data

File replication vs disk replication

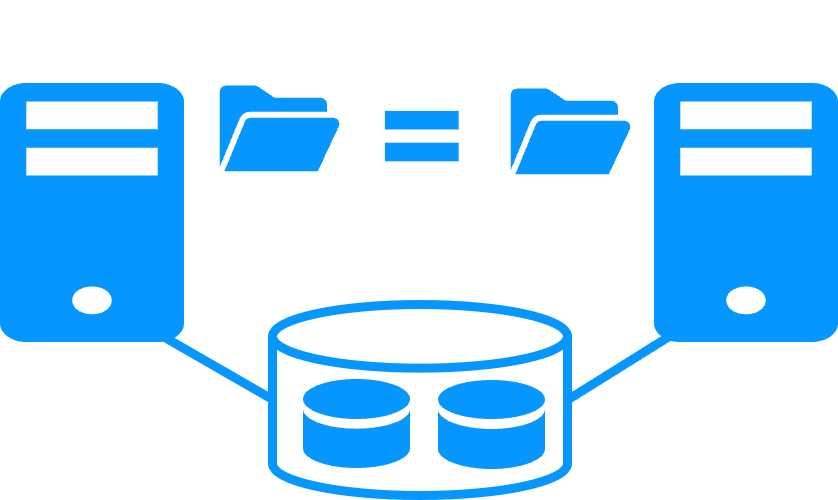

File replication vs shared disk

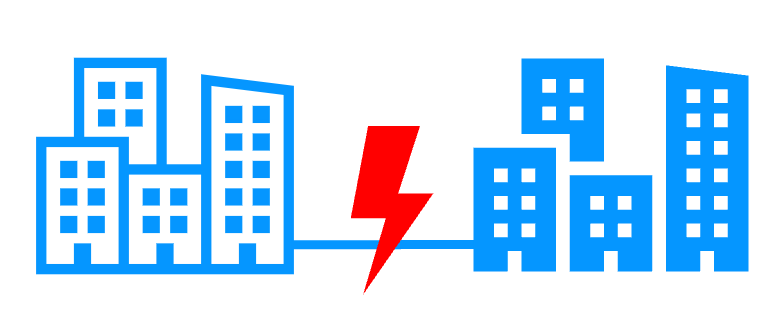

Remote sites and virtual IP address

Quorum and split brain

Active/active cluster

Uniform high availability solution

RTO / RPO

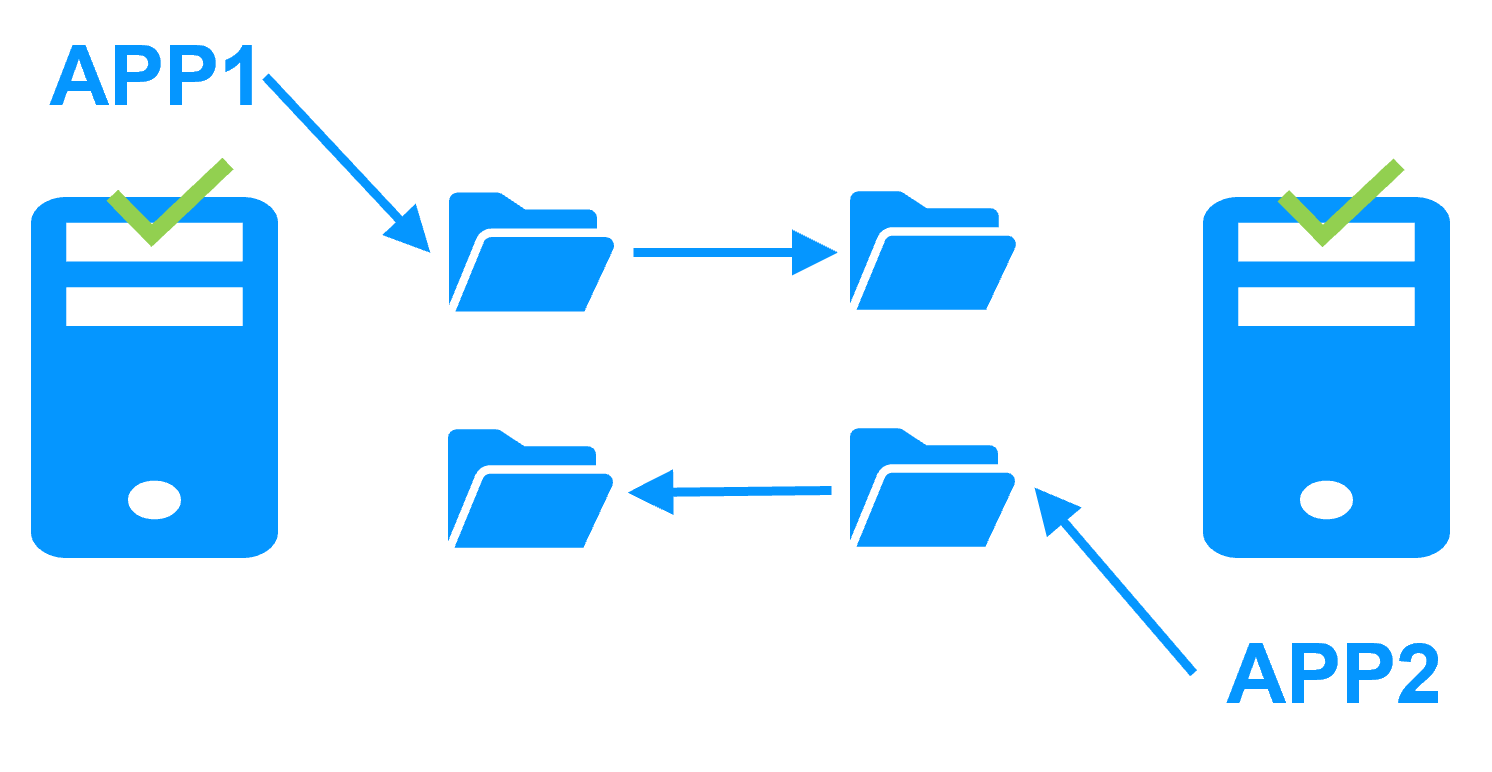

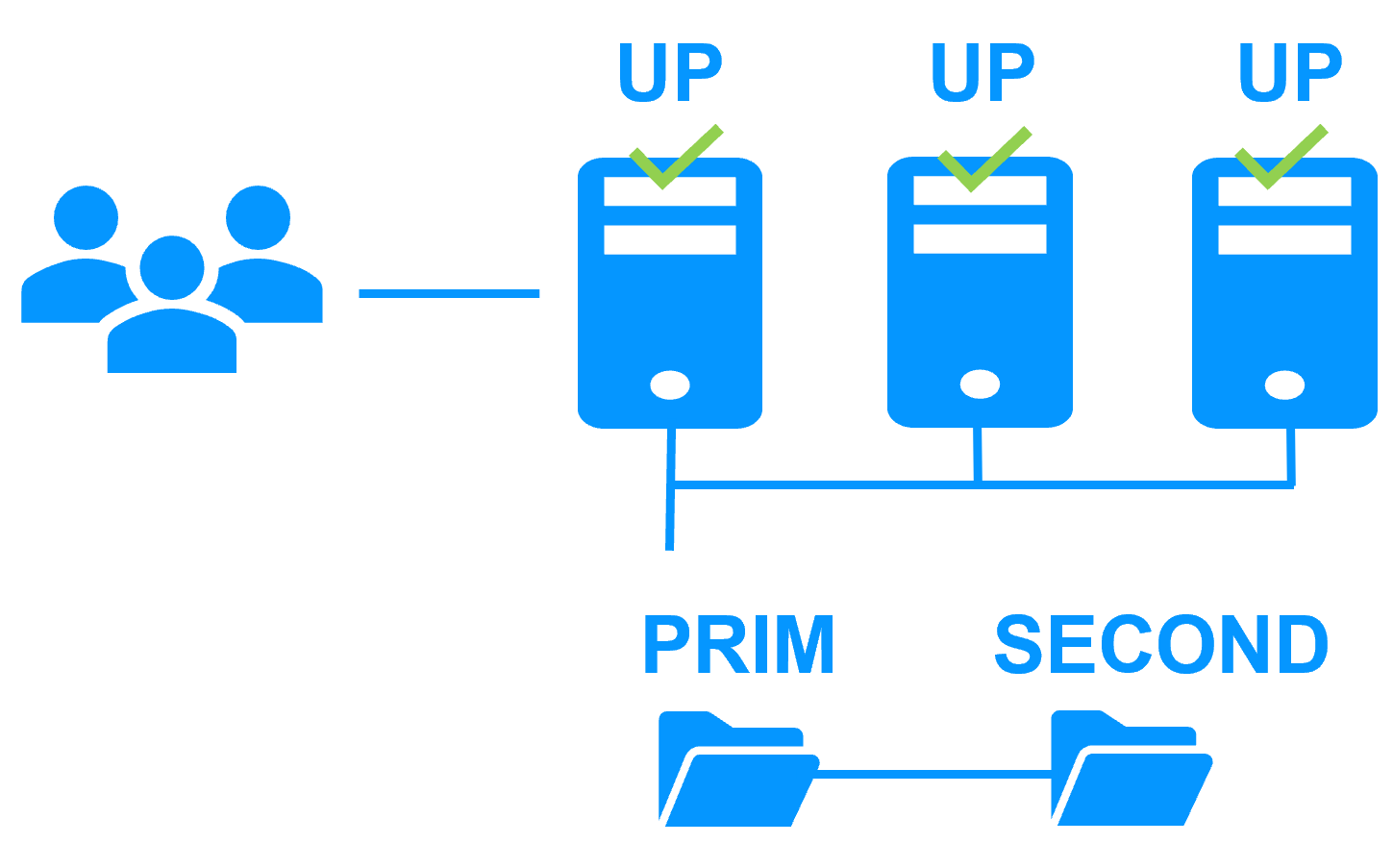

Evidian SafeKit farm cluster with load balancing and failover

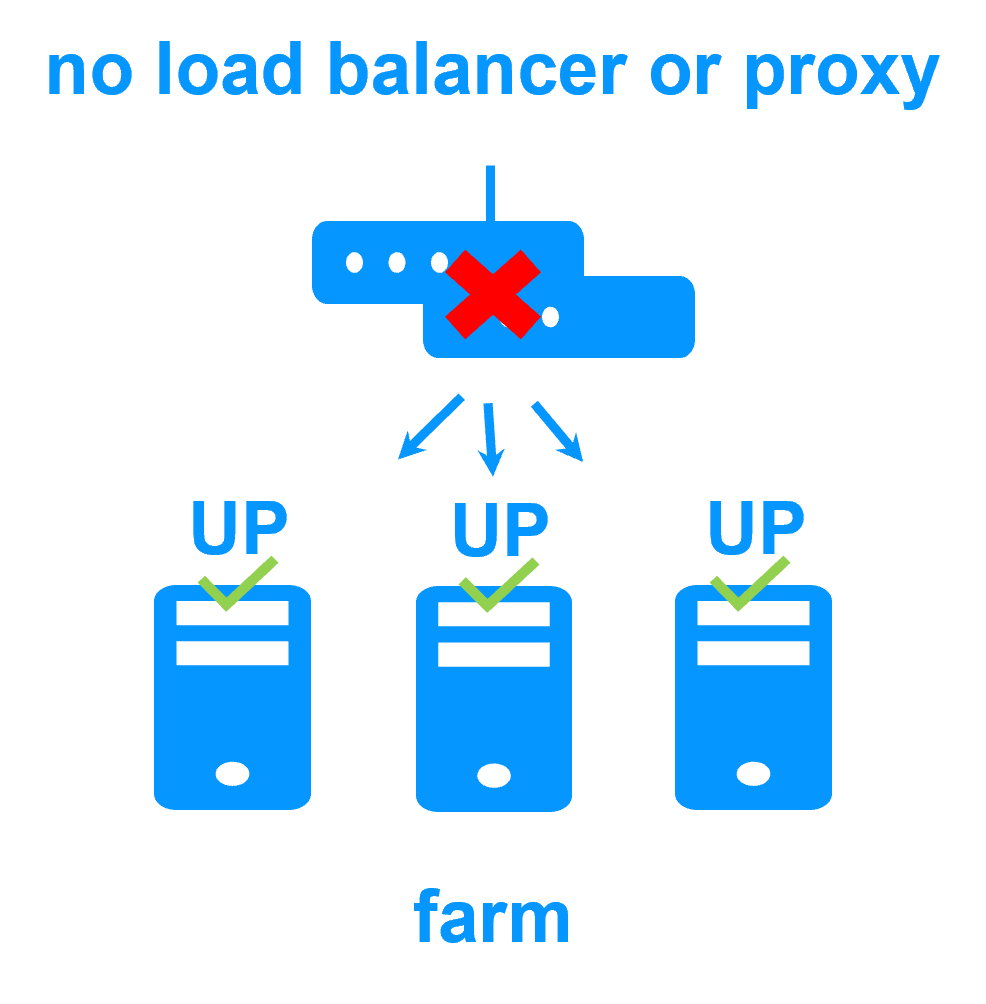

No load balancer or dedicated proxy servers or special multicast Ethernet address


All clustering features


Remote sites and virtual IP address


Uniform high availability solution


New application (real-time replication and failover)

- Windows (mirror.safe)

- Linux (mirror.safe)

New application (network load balancing and failover)

Database (real-time replication and failover)

- Microsoft SQL Server (sqlserver.safe)

- PostgreSQL (postgresql.safe)

- MySQL (mysql.safe)

- Oracle (oracle.safe)

- MariaDB (sqlserver.safe)

- Firebird (firebird.safe)

Web (network load balancing and failover)

- Apache (apache_farm.safe)

- IIS (iis_farm.safe)

- NGINX (farm.safe)

Full VM or container real-time replication and failover

- Hyper-V (hyperv.safe)

- KVM (kvm.safe)

- Docker (mirror.safe)

- Podman (mirror.safe)

- Kubernetes K3S (k3s.safe)

Amazon AWS

- AWS (mirror.safe)

- AWS (farm.safe)

Google GCP

- GCP (mirror.safe)

- GCP (farm.safe)

Microsoft Azure

- Azure (mirror.safe)

- Azure (farm.safe)

Other clouds

- All Cloud Solutions

- Generic (mirror.safe)

- Generic (farm.safe)

Physical security (real-time replication and failover)

- Milestone XProtect (milestone.safe)

- Nedap AEOS (nedap.safe)

- Genetec SQL Server (sqlserver.safe)

- Bosch AMS (hyperv.safe)

- Bosch BIS (hyperv.safe)

- Bosch BVMS (hyperv.safe)

- Hanwha Vision (hyperv.safe)

- Hanwha Wisenet (hyperv.safe)

Siemens (real-time replication and failover)

- Siemens Siveillance suite (hyperv.safe)

- Siemens Desigo CC (hyperv.safe)

- Siemens Siveillance VMS (SiveillanceVMS.safe)

- Siemens SiPass (hyperv.safe)

- Siemens SIPORT (hyperv.safe)

- Siemens SIMATIC PCS 7 (hyperv.safe)

- Siemens SIMATIC WinCC (hyperv.safe)